There are many ways to organize caching. Let's look at the main ones. In every scheme, NGINX acts as the web server; in some cases Apache could take its place. I won't dwell on how to implement each option; treat these schemes as a cheat sheet. Note that all schemes assume traffic from anonymous users.

All schemes apply not only to Drupal but also to other solutions, for example WordPress or any PHP application.

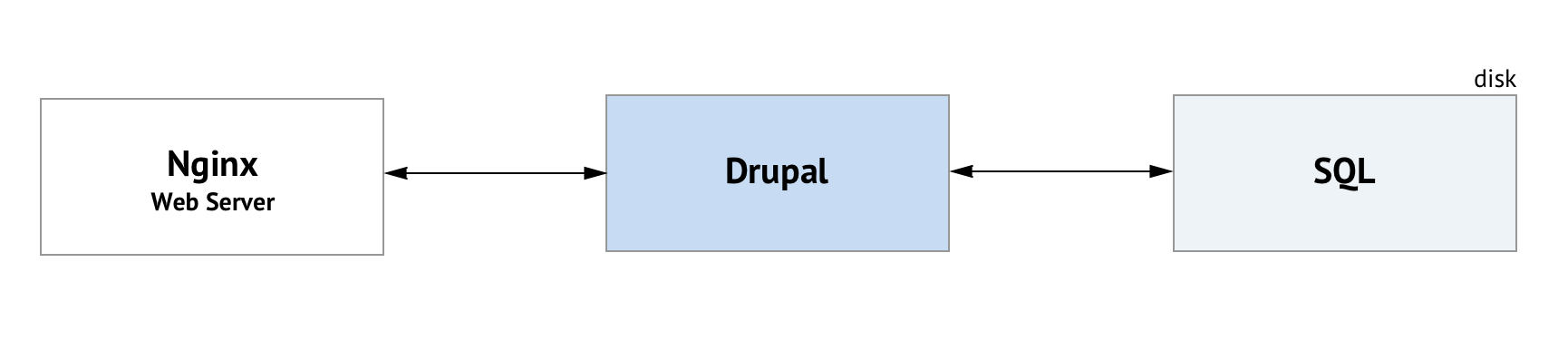

«Out of the Box» variant

Standard Drupal caching: cache tables are stored in the SQL database.

Standard Drupal Cache

On every user request, the PHP application (in our case, Drupal) is launched.
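To make the request path concrete, here is a minimal Python sketch, assuming a single cache table with `cid`, `data` and `expire` columns (loosely modeled on Drupal's cache bins). SQLite stands in for the real SQL database, and `handle_request`, `cache_get` and `cache_set` are hypothetical helpers, not Drupal's API. It shows that the application starts on every request; a cache hit only skips the expensive page build.

```python
import sqlite3
import time

# SQLite stands in for the SQL database that holds the cache table.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE cache (cid TEXT PRIMARY KEY, data TEXT, expire INTEGER)")

app_runs = 0  # how many times the "PHP application" was started
renders = 0   # how many times the expensive page build actually ran


def cache_get(cid):
    row = db.execute("SELECT data, expire FROM cache WHERE cid = ?", (cid,)).fetchone()
    if row is None or row[1] < time.time():
        return None  # missing or expired
    return row[0]


def cache_set(cid, data, ttl=300):
    db.execute(
        "INSERT OR REPLACE INTO cache (cid, data, expire) VALUES (?, ?, ?)",
        (cid, data, int(time.time()) + ttl),
    )


def handle_request(path):
    """Hypothetical handler: the application starts on every request."""
    global app_runs, renders
    app_runs += 1
    cached = cache_get("page:" + path)
    if cached is not None:
        return cached
    renders += 1
    html = f"<h1>{path}</h1>"  # stands in for the expensive page build
    cache_set("page:" + path, html)
    return html


for _ in range(3):
    handle_request("/node/1")
print(app_runs, renders)  # 3 1
```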

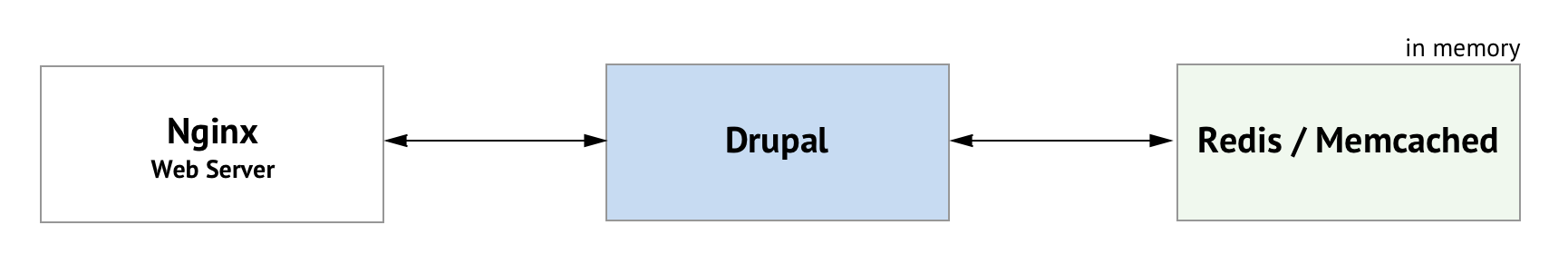

Redis or Memcached as a cache backend

Redis or Memcached is installed on the server, and the standard Drupal cache is moved to that store. Drupal modules: Memcache Storage or Memcache API and Integration (for Memcached), Redis (for Redis).

Cache data is kept in memory, which is faster than the default caching in the SQL database. Drupal is still launched on every request.
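A sketch of what "moving the cache" means in practice, assuming a small get/set interface. `CacheBackend`, `SqlBackend` and `MemoryBackend` are hypothetical names, and a plain dict stands in for Redis or Memcached so the example runs without a server. The request handler is identical for both backends; only the storage behind it differs, and it still runs on every request.

```python
import sqlite3
import time
from typing import Optional, Protocol


class CacheBackend(Protocol):
    def get(self, cid: str) -> Optional[str]: ...
    def set(self, cid: str, data: str, ttl: int = 300) -> None: ...


class SqlBackend:
    """Default scheme: cache rows live in an SQL table (SQLite as a stand-in)."""

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute("CREATE TABLE cache (cid TEXT PRIMARY KEY, data TEXT, expire REAL)")

    def get(self, cid):
        row = self.db.execute("SELECT data, expire FROM cache WHERE cid = ?", (cid,)).fetchone()
        return row[0] if row and row[1] > time.time() else None

    def set(self, cid, data, ttl=300):
        self.db.execute("INSERT OR REPLACE INTO cache VALUES (?, ?, ?)", (cid, data, time.time() + ttl))


class MemoryBackend:
    """Stand-in for Redis/Memcached: a key-value store held in RAM."""

    def __init__(self) -> None:
        self.store = {}

    def get(self, cid):
        item = self.store.get(cid)
        return item[0] if item and item[1] > time.time() else None

    def set(self, cid, data, ttl=300):
        self.store[cid] = (data, time.time() + ttl)


def handle_request(backend: CacheBackend, path: str) -> str:
    # Same application code for every backend; it runs on each request.
    cid = "page:" + path
    html = backend.get(cid)
    if html is None:
        html = f"<h1>{path}</h1>"
        backend.set(cid, html)
    return html


for backend in (SqlBackend(), MemoryBackend()):
    handle_request(backend, "/node/1")  # miss: page built and stored
    print(type(backend).__name__, backend.get("page:/node/1"))
# SqlBackend <h1>/node/1</h1>
# MemoryBackend <h1>/node/1</h1>
```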

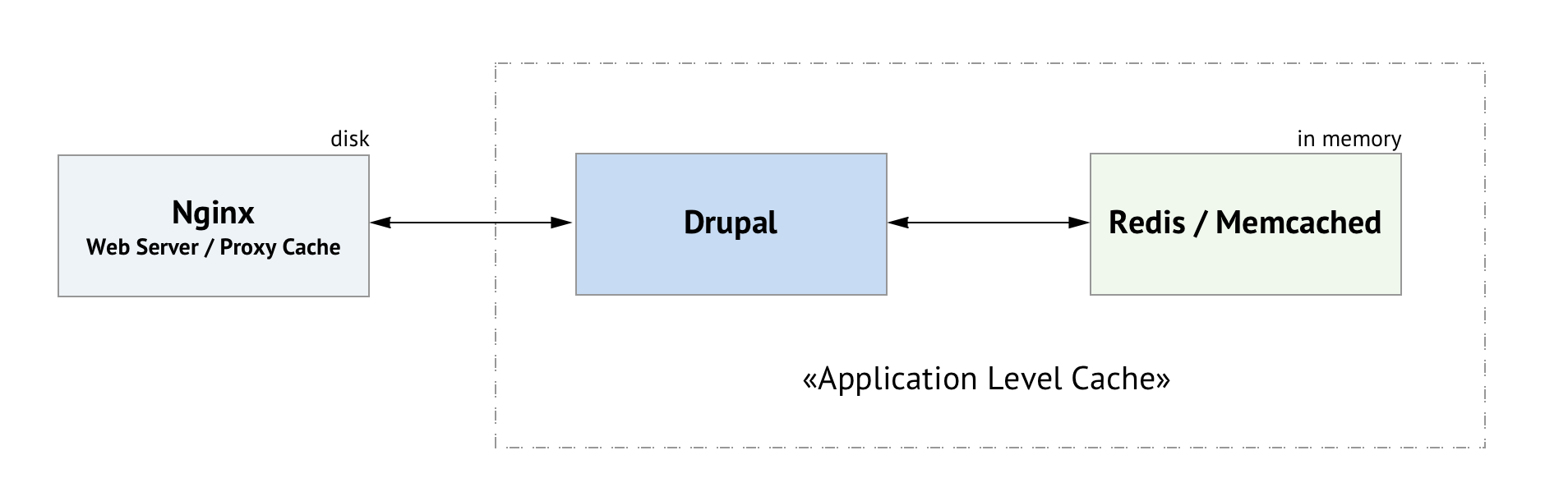

NGINX as a caching proxy

For simplicity, let's call the Drupal + Redis/MC bundle the Application Level Cache, since it caches at the application level.

NGINX disk cache and Drupal with in-memory cache

NGINX sits at the entry point, acting as both a web server and a caching proxy. It caches full pages and saves them to disk. When a user requests a page, NGINX serves it from its cache (if it is there) without calling Drupal.

Disadvantages:

Cache invalidation ("cache cleaning") is a problem. You need the paid NGINX Plus or your own solution; one option is the third-party ngx_cache_purge module.
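The sketch below, using plain dicts as hypothetical stand-ins for Drupal's content and the NGINX page cache, shows why invalidation matters: after an editor updates a node, the proxy keeps serving the old copy until something removes it. `purge` is a hypothetical hook; in a real setup Drupal would trigger the purge over HTTP, for example through an endpoint provided by ngx_cache_purge or NGINX Plus.

```python
content = {"/node/1": "v1"}  # what Drupal would render right now
page_cache = {}              # stand-in for the NGINX full-page cache


def fetch(uri):
    # The proxy only asks Drupal when the page is not cached yet.
    if uri not in page_cache:
        page_cache[uri] = f"<html>{content[uri]}</html>"
    return page_cache[uri]


def purge(uri):
    """Hypothetical invalidation hook, e.g. called when a node is saved."""
    page_cache.pop(uri, None)


fetch("/node/1")
content["/node/1"] = "v2"  # an editor updates the node
stale = fetch("/node/1")   # still the cached v1 page
purge("/node/1")
fresh = fetch("/node/1")   # rebuilt from the new content
print(stale, fresh)  # <html>v1</html> <html>v2</html>
```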

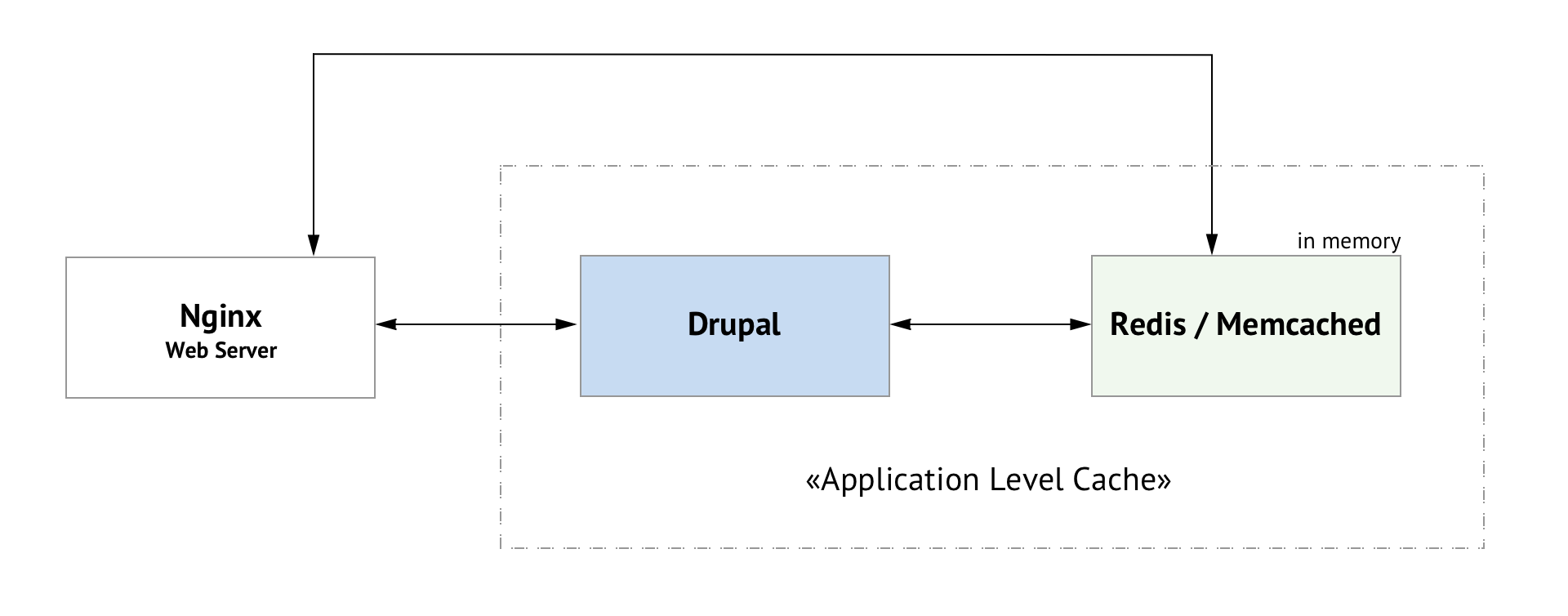

NGINX works directly with Redis / Memcached

This is one of the most attractive options: there are no extra components, and the cache is fully managed by Drupal.

NGINX can read data from the PHP application cache

NGINX is configured to read data directly from the Redis/MC instance that Drupal manages.

Varnish

This is a popular option. Varnish is cool and fast.

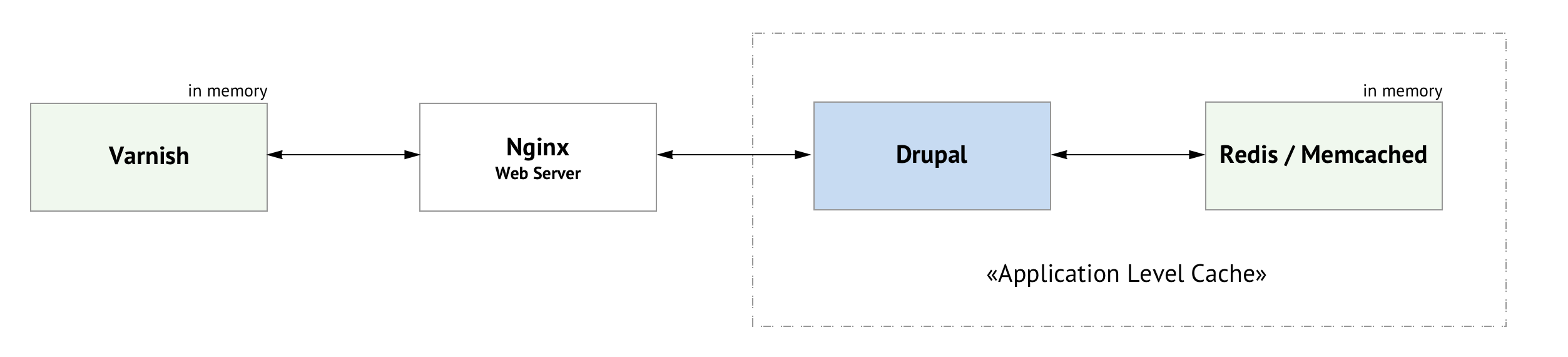

Caching with NGINX and Varnish

Now we have one more component: Varnish, with its not-so-simple configuration. That is not very convenient. But the main disadvantage of this scheme is that Varnish (the open-source Varnish Cache) doesn't handle SSL by itself, so HTTPS will not work here.

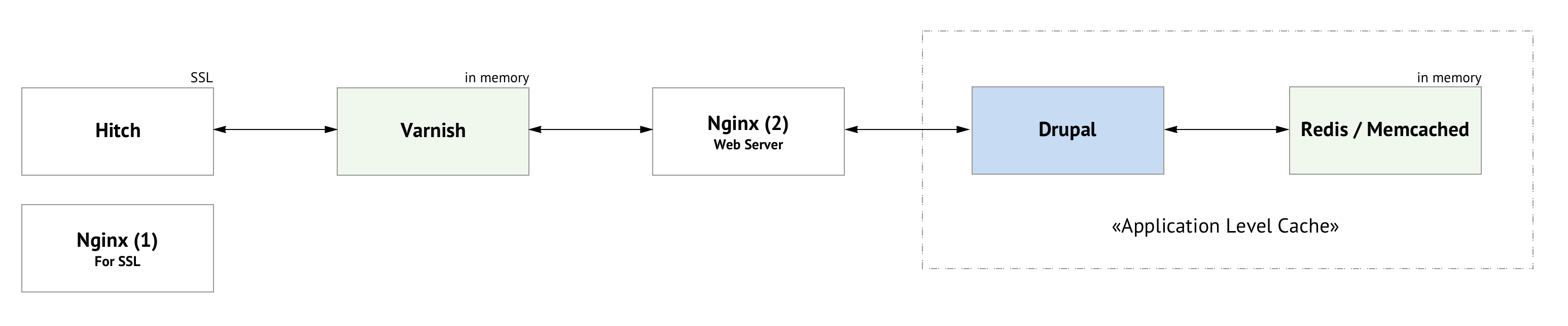

Varnish + Hitch (or NGINX) for SSL

For SSL support, you can use Hitch, a TLS proxy from Varnish Software that terminates TLS/SSL and forwards the decrypted traffic to the backend. Alternatively, you can put NGINX in front of Varnish to handle HTTPS traffic.

Varnish with HTTPS traffic support

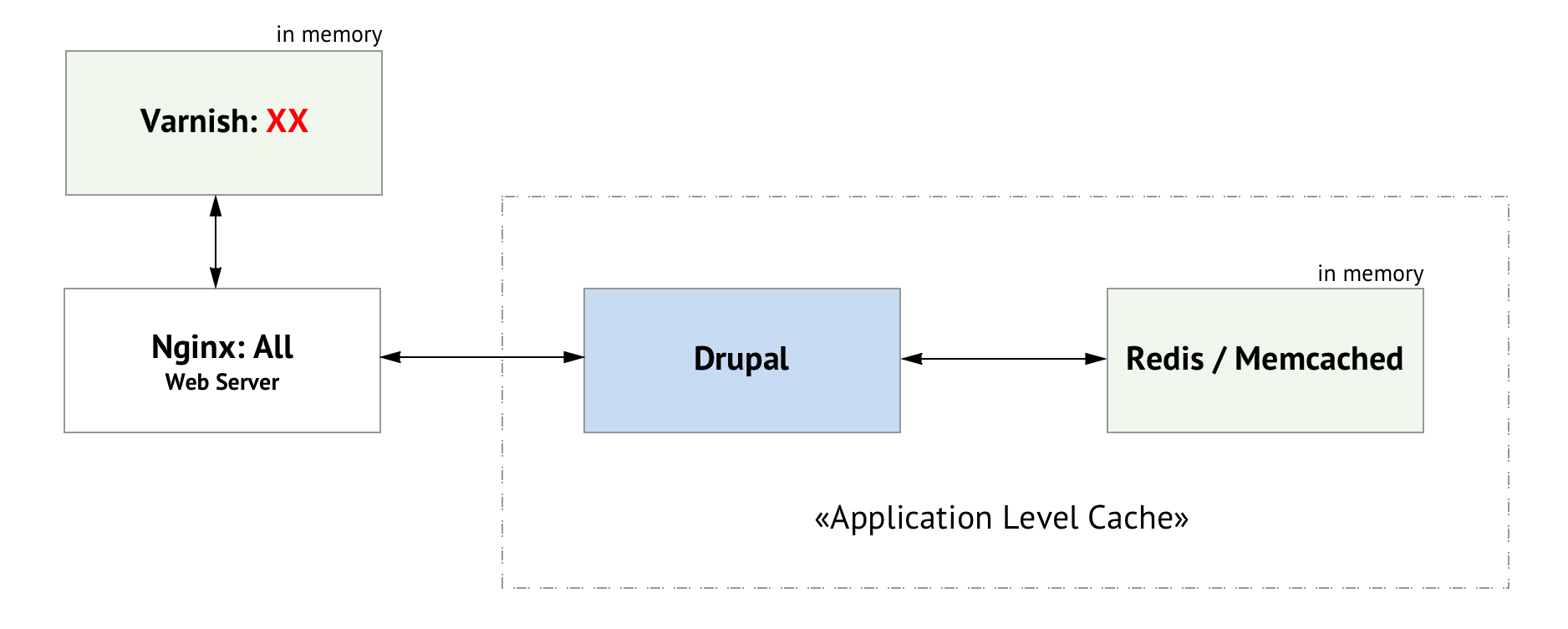

For simple projects, you don't want many components (and, I suspect, not for complex ones either). Consider this option: a single NGINX plus Varnish, with HTTPS support.

Varnish + one NGINX and SSL support

General scheme:

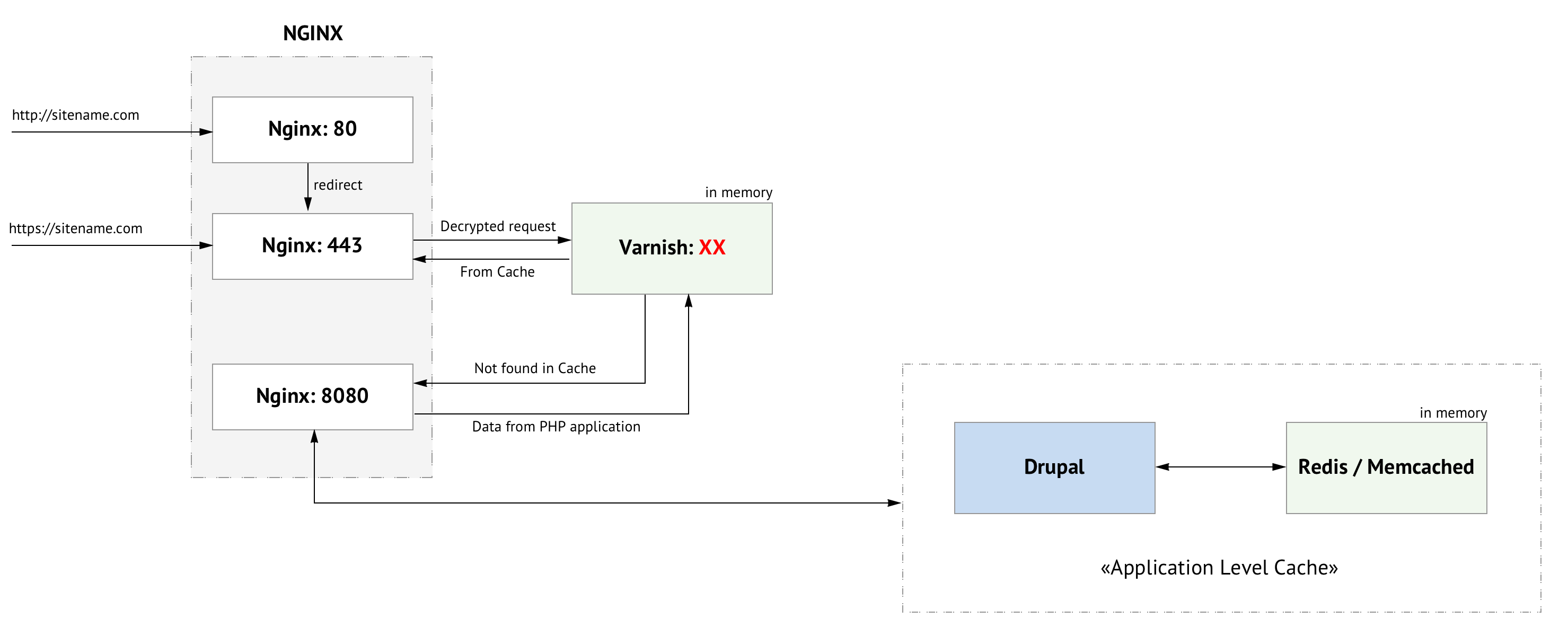

In more detail:

NGINX listens on several ports. Port 80 redirects to HTTPS. Requests to port 443 are decrypted and passed to Varnish, which must listen on port XX (any port you choose). Varnish checks whether it has a cached response for the request. If it does, it returns it. If not, it proxies the request back to NGINX on port 8080, where NGINX must be configured to serve the PHP application.
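This request flow can be traced with a small Python simulation. Each function stands for one listener; `VARNISH_PORT = 6081` is only an assumed value for the article's XX, the Varnish cache is keyed by path alone for brevity, and no real TLS or HTTP is involved. The `trace` list records which hops handle each request, so a miss and a hit can be compared.

```python
VARNISH_PORT = 6081  # the article's "XX": any free port (value assumed here)
BACKEND_PORT = 8080  # NGINX server that passes requests to PHP

varnish_cache = {}   # stand-in for Varnish's object cache
trace = []           # records which hops handled each request


def php_app(path):
    trace.append("php")
    return 200, f"<html>{path}</html>"


def nginx_backend(path):
    """NGINX on :8080, configured to run the PHP application."""
    trace.append(f"nginx:{BACKEND_PORT}")
    return php_app(path)


def varnish(path):
    """Varnish on :VARNISH_PORT: serve from cache or proxy back to NGINX."""
    trace.append(f"varnish:{VARNISH_PORT}")
    if path not in varnish_cache:
        varnish_cache[path] = nginx_backend(path)
    return varnish_cache[path]


def nginx_front(port, host, path):
    """Public NGINX: :80 redirects, :443 terminates TLS and forwards to Varnish."""
    trace.append(f"nginx:{port}")
    if port == 80:
        return 301, f"https://{host}{path}"
    if port == 443:
        # TLS is decrypted here; Varnish only ever sees plain HTTP.
        return varnish(path)
    return 404, ""


print(nginx_front(80, "example.com", "/node/1"))
# (301, 'https://example.com/node/1')
trace.clear()
nginx_front(443, "example.com", "/node/1")  # miss
print(trace)  # ['nginx:443', 'varnish:6081', 'nginx:8080', 'php']
trace.clear()
nginx_front(443, "example.com", "/node/1")  # hit
print(trace)  # ['nginx:443', 'varnish:6081']
```

On a hit, the request never reaches the NGINX server on port 8080 or PHP, which is where the savings of this scheme come from.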